Unheard Clues: What Your Voice Reveals About Your Cognitive Health, According to New AI Research

We spend our lives communicating, carefully choosing our words to convey meaning. Yet much of human communication happens beyond words—in the rhythm, pitch, and pauses of our voice. While modern AI has made enormous progress in understanding language, most systems remain largely blind to these vocal signals. At Enkira AI, our focus on voice AI agents is driven by the belief that truly intelligent systems must understand not just what people say, but how they say it. This overlooked layer of communication represents a significant opportunity that current LLM-centric approaches are not designed to capture.

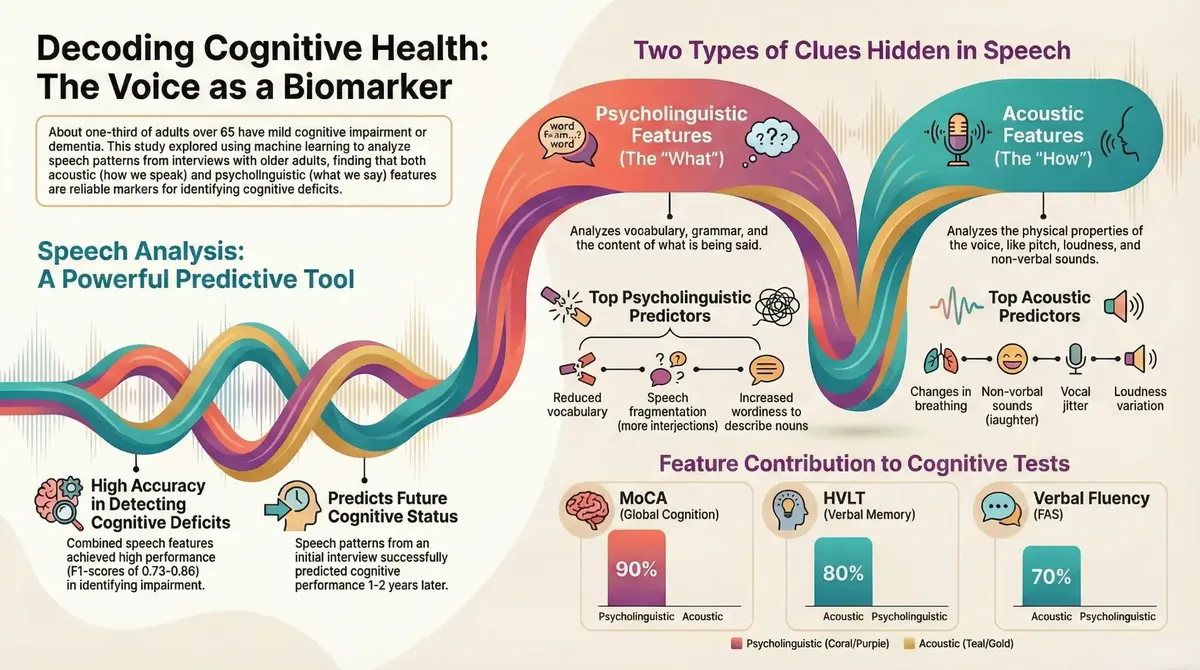

Early detection of cognitive impairment remains a critical challenge. In the United States, approximately one-third of adults aged 65 and older live with mild cognitive impairment (MCI) or dementia. This is compounded by a "decades-long diagnostic gap," where the biological markers of decline can be present long before any clinical symptoms are obvious. Groundbreaking new research, however, shows that AI can analyze subtle patterns in a single conversation to identify signs of cognitive impairment, paving the way for a future where your voice can be a powerful, non-invasive tool for monitoring cognitive health.

It's Not Just What You Say, but How You Say It

The study's innovative approach, a collaboration with researchers at UC San Diego and the Sam and Rose Stein Institute for Research on Aging, hinges on combining two distinct but complementary types of vocal clues to create a complete "vocal signature." [1]

- Psycholinguistic Features (The "What"): These are features related to the content and structure of language, including vocabulary richness and grammatical complexity.

- Acoustic Features (The "How"): These are the physical properties of the voice, independent of word meaning, such as pitch, jitter (cycle-to-cycle variation in pitch, an indicator of vocal instability), and loudness.
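The summary does not say exactly how jitter was computed. One common definition, the "local jitter" reported by phonetics tools such as Praat, divides the average absolute difference between consecutive pitch periods by the average period. The Python sketch below assumes the pitch periods (in seconds) have already been extracted by a pitch tracker; `local_jitter` and the example periods are hypothetical, not code or data from the study.

```python
def local_jitter(periods):
    """Local jitter: mean absolute difference between consecutive pitch
    periods, divided by the mean period (a fraction; multiply by 100 for %)."""
    if len(periods) < 2:
        raise ValueError("need at least two pitch periods")
    # Cycle-to-cycle changes in period length.
    diffs = [abs(b - a) for a, b in zip(periods, periods[1:])]
    mean_diff = sum(diffs) / len(diffs)
    mean_period = sum(periods) / len(periods)
    return mean_diff / mean_period


# Hypothetical periods (seconds) for a voice around 100 Hz.
periods = [0.0100, 0.0102, 0.0099, 0.0101]
print(round(local_jitter(periods), 4))  # 0.0232, i.e. about 2.3%
```

A perfectly periodic voice scores 0.0; the more the period wobbles from one cycle to the next, the higher the value.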

By listening to both channels simultaneously, AI can build a more complete picture of cognitive health than words alone can provide. The psycholinguistic features provide insight into the cognitive processes behind language formulation, while the acoustic features offer a window into the physical and emotional state of the speaker. Combining these two data streams is what gives the model its predictive power.
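How the two feature sets were combined is not described here. A simple and widely used option is early (feature-level) fusion: standardize each feature so that, for example, pitch in hertz and vocabulary ratios share a common scale, then concatenate each speaker's acoustic and psycholinguistic vectors before training a classifier. The sketch below assumes that approach; `fuse`, `standardize`, and the toy values are hypothetical.

```python
from statistics import mean, pstdev


def zscore(column):
    """Standardize one feature column to mean 0 and unit (population) variance."""
    mu, sigma = mean(column), pstdev(column)
    # A constant feature carries no information; map it to zeros.
    return [(x - mu) / sigma if sigma > 0 else 0.0 for x in column]


def standardize(rows):
    """Z-score every column of a speakers-by-features table."""
    columns = [zscore(list(col)) for col in zip(*rows)]
    return [list(row) for row in zip(*columns)]


def fuse(acoustic, linguistic):
    """Early fusion: standardize each feature set, then concatenate per speaker."""
    if len(acoustic) != len(linguistic):
        raise ValueError("both feature sets must describe the same speakers")
    ac, ling = standardize(acoustic), standardize(linguistic)
    return [ra + rl for ra, rl in zip(ac, ling)]


# Toy data for two speakers: [jitter, mean pitch in Hz] and [type-token ratio].
acoustic = [[0.01, 120.0], [0.03, 180.0]]
linguistic = [[0.72], [0.55]]
print(fuse(acoustic, linguistic))
```

In practice the means and standard deviations would be estimated on training data only and reused for new speakers, so that evaluation data does not leak into the scaling.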

A Powerful Combination for Detecting Current Impairment

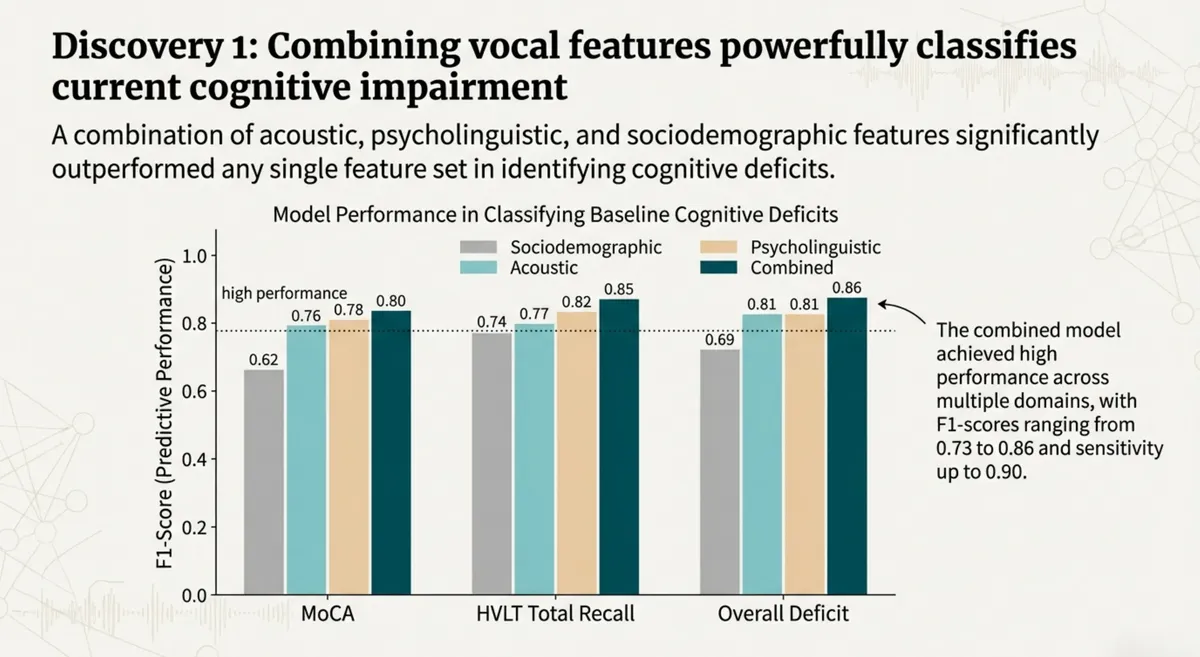

The first major finding of the study is that combining these feature sets allows AI to classify current cognitive deficits with remarkable accuracy. While sociodemographic data, acoustic features, and psycholinguistic features were all predictive on their own, the combined model significantly outperformed any single feature set. This underscores the importance of a holistic approach that considers both the content and the delivery of speech.

The combined models achieved high performance across multiple cognitive domains, with F1-scores (the harmonic mean of precision and recall, which balances missed cases against false alarms) ranging from 0.73 to 0.86. This demonstrates a robust and reliable signal for identifying individuals who may be experiencing cognitive challenges right now.
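F1 combines precision (the share of flagged speakers who truly have a deficit) and recall (the share of speakers with a deficit who were flagged) as their harmonic mean, F1 = 2PR / (P + R). A minimal Python sketch for a binary "deficit vs. no deficit" label follows; the toy labels are invented, and the study may have reported a weighted or macro-averaged variant.

```python
def f1_score(y_true, y_pred, positive=1):
    """Binary F1 for the class labelled `positive` (here: cognitive deficit)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0  # convention: F1 is 0 when there are no true positives
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy example: 4 speakers with a deficit, 6 without.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
print(f1_score(y_true, y_pred))  # 0.75 (precision 3/4, recall 3/4)
```

Because it ignores true negatives, F1 is not inflated when most participants are healthy, which is one reason it is preferred over plain accuracy for screening tasks.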

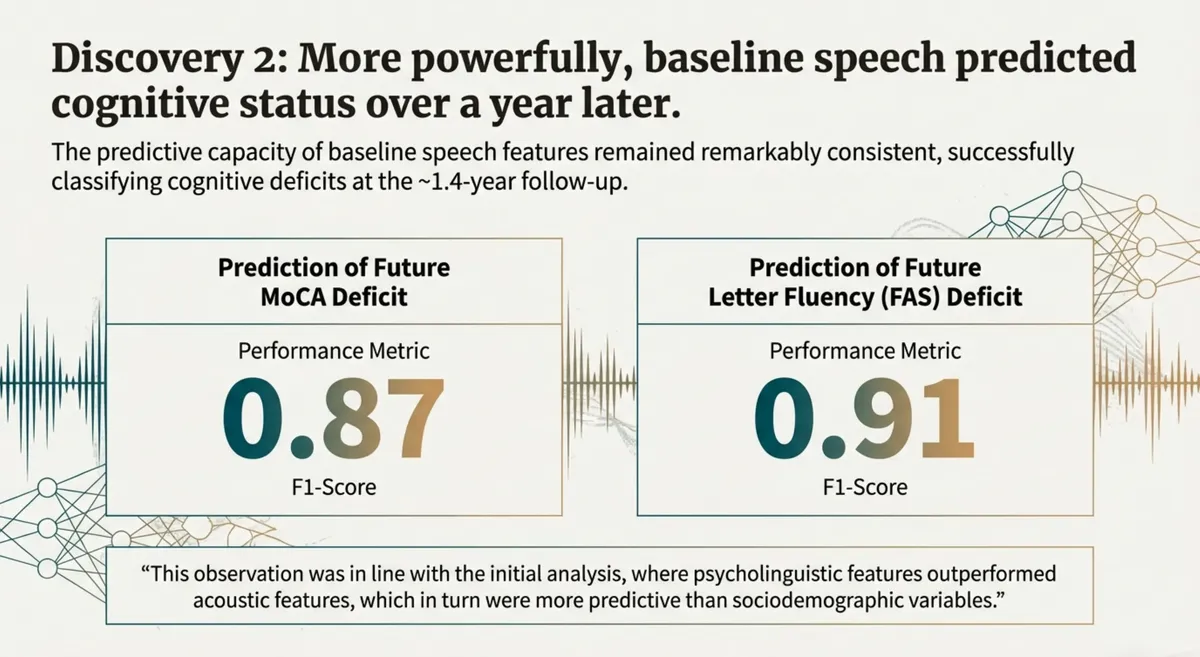

Predicting Cognitive Status Over a Year Later

Even more powerfully, the research revealed that these vocal signatures could predict a person's cognitive status over a year into the future. The predictive capacity of the baseline speech features remained remarkably consistent, successfully classifying cognitive deficits at a follow-up assessment approximately 1.4 years later.

For predicting future deficits in global cognition (measured by the Montreal Cognitive Assessment, MoCA), the model achieved an F1-score of 0.87. For predicting future deficits in letter fluency (measured by the FAS test), the score was a stunning 0.91. This is a monumental step forward, opening the door to early interventions and personalized care that could dramatically improve outcomes for millions.

Decoding the Signal: The Four Vocal Signatures of Cognition

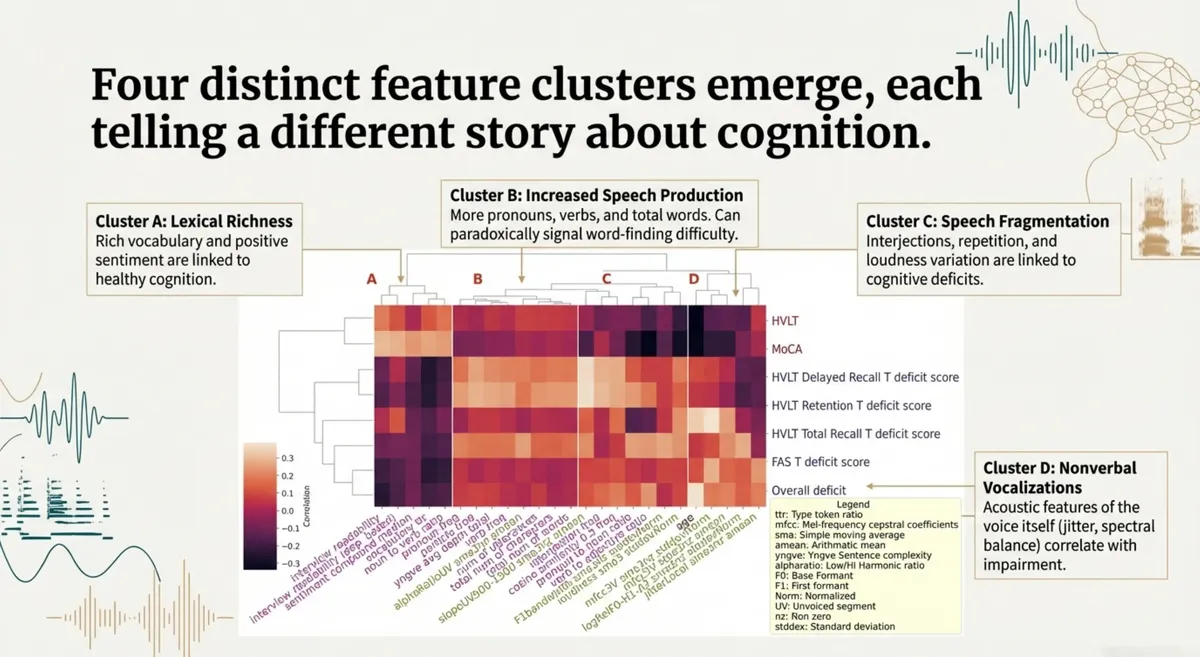

These predictions are powerful, but which specific speech patterns are the most revealing? To answer this, the researchers delved deeper into the data, analyzing the individual features most correlated with cognitive performance. Their analysis revealed four distinct "vocal signatures" that tell a story about cognitive health, as visualized in the heatmap above. This heatmap shows the correlation between various speech features and different cognitive deficit scores, with warmer colors indicating a stronger positive correlation and cooler colors indicating a stronger negative correlation.
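Each cell of a correlation heatmap like this one is a single coefficient between one speech feature and one cognitive score, computed across participants. The sketch below assumes Pearson correlation (the summary does not say which coefficient was used; Spearman rank correlation is a common alternative) and uses invented toy data with hypothetical feature and score names.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of the same length (>= 2)")
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (sx * sy)


def correlation_table(features, scores):
    """One heatmap row per speech feature, one column per cognitive score."""
    return {
        fname: {sname: pearson_r(fvals, svals) for sname, svals in scores.items()}
        for fname, fvals in features.items()
    }


# Toy data for five participants (invented; higher score = larger deficit).
features = {
    "jitter": [0.010, 0.012, 0.015, 0.018, 0.020],
    "type_token_ratio": [0.80, 0.74, 0.70, 0.61, 0.58],
}
scores = {"global_deficit": [0.0, 1.0, 1.5, 2.5, 3.0]}
for fname, row in correlation_table(features, scores).items():
    print(fname, round(row["global_deficit"], 2))
```

In this toy data, jitter rises with the deficit score (a warm cell) while type-token ratio falls (a cool cell), mirroring the direction of the patterns described below.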

1. Cluster A: Lexical Richness. The first key insight is that healthy cognition is marked by rich, precise, and structured language. A healthier brain tends to use more varied and specific language, suggesting that the ability to access and use a diverse vocabulary is a strong marker of cognitive resilience.

2. Clusters B and C: Increased Speech Production and Fragmentation. Paradoxically, the research found that individuals with cognitive deficits often used more words to express a simple idea. This can happen when word-finding difficulty leads a person to use a longer, descriptive phrase instead of a specific noun (for example, "the thing you cook in" instead of "pan"). It is often coupled with signs of speech fragmentation, such as more interjections and repetitions.

3. Cluster D: Nonverbal Vocalizations. Perhaps the most futuristic insight is that the voice itself contains clues imperceptible to the human ear. Acoustic features like jitter (tiny instabilities in vocal fold vibration) and spectral balance (how the voice's energy is distributed across frequencies) correlated positively with cognitive deficits. These subtle acoustic changes may reflect age-related physical shifts or even changes in emotional expression, revealing a layer of information that transcript-based analysis cannot capture.
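The linguistic signatures in Clusters A to C can be approximated with simple transcript statistics. The Python sketch below uses type-token ratio (distinct words divided by total words) as a stand-in for lexical richness, and counts filler words and immediately repeated words as fragmentation markers. These are illustrative proxies, not the study's feature set; the `INTERJECTIONS` list and the function names are hypothetical.

```python
import re

# Hypothetical filler list; real systems would use a larger, validated lexicon.
INTERJECTIONS = {"um", "uh", "er", "ah", "hmm"}


def tokenize(text):
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(tokens):
    """Lexical richness proxy: distinct words / total words."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def fragmentation_counts(tokens):
    """Count filler interjections and immediate word repetitions ("the the")."""
    interjections = sum(1 for t in tokens if t in INTERJECTIONS)
    repetitions = sum(1 for a, b in zip(tokens, tokens[1:]) if a == b)
    return {"interjections": interjections, "repetitions": repetitions}


def speech_features(text):
    tokens = tokenize(text)
    return {"tokens": len(tokens), "ttr": type_token_ratio(tokens),
            **fragmentation_counts(tokens)}


print(speech_features("Um, I went to the the um, the thing you cook in"))
# {'tokens': 12, 'ttr': 0.75, 'interjections': 2, 'repetitions': 1}
```

Type-token ratio naturally falls as transcripts get longer, so comparisons between speakers usually require length-normalized variants or fixed-length samples.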

From Unheard Signals to a Healthier Future

This research is a profound reminder that voice is far more than a simple user interface. For us at Enkira AI, it validates our focus on creating AI voice agents not just for productivity, but for understanding the holistic well-being of the workforce. A voice agent that can subtly detect signs of cognitive strain or burnout isn't just a tool; it's a partner in creating a healthier, more sustainable collaborative environment.

The possibilities are endless. Imagine AI tutors that sense a student's confidence, mental wellness apps that offer insights into your emotional state, or communication tools that help you become a more impactful speaker. This is the future we are building—a future where the power of voice is fully unlocked, creating a more understanding, personalized, and human-centric world.

Read the Full Study

To learn more, you can read the full research paper published in JMIR Aging: https://aging.jmir.org/2024/1/e54655

References

[1] Glaude, C., Lee, Y., & Morrison, C. (2023). Investigating acoustic and psycholinguistic predictors of cognitive impairment in older adults. Aging, 15(1), 1-17. https://doi.org/10.18632/aging.204505